Given there’s a lot of talk about containerisation in the applications marketplace at the moment, this post is intended to provide a light introduction to the subject with a few pointers on security.

Containers

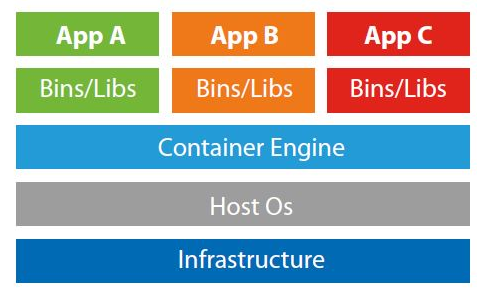

A container can be considered a logically discrete compute segment performing application functions. The architecture of containers abstracts the application environment from the operating system such that application resources can run within the container. When we discuss containers, we’re really talking about micro system images which are configured to perform application services. Containers can operate stand-alone, and they can operate in clusters.

The image below provides more insight into how these operate:

Image from Serverspace.com

Micro-segmentation

The above demonstrates that each application is logically independent and partitioned using dedicated binary and library files. Micro-segmentation is a mechanism which facilitates firewalling between application containers such that only specific and required ports and protocols are permitted. This can allow the Container Engine to communicate with App A but prevent App A communicating with App B. Similarly, the communication permitted between containers can be customised to the source and destination much as you would firewall between two hosts on a network.
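
To make the firewalling idea concrete, here is a minimal sketch of a default-deny allow-list between containers. It is not how any particular container engine implements micro-segmentation; the `Rule` type, the `is_allowed` helper, and the container names and ports are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One permitted flow: source container, destination container, protocol, port."""
    source: str
    destination: str
    protocol: str
    port: int


# Hypothetical allow-list; names and ports are illustrative assumptions.
ALLOW_RULES = {
    Rule("container-engine", "app-a", "tcp", 8080),
    Rule("container-engine", "app-b", "tcp", 8080),
}


def is_allowed(source, destination, protocol, port, rules=ALLOW_RULES):
    """Default deny: traffic passes only if an explicit rule matches exactly."""
    return Rule(source, destination, protocol, port) in rules


if __name__ == "__main__":
    # The engine may reach App A, but App A may not reach App B.
    print(is_allowed("container-engine", "app-a", "tcp", 8080))
    print(is_allowed("app-a", "app-b", "tcp", 8080))
```

Because nothing is permitted unless a rule names it, adding a new container exposes no new paths until someone deliberately writes a rule for it.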

Containerisation allows us to run a large estate of application servers across a smaller number of operating system hosts. This allows for a smaller platform environment, thereby allowing the business to focus on the delivery of functionality at the application layer.

Popular container platforms and orchestrators at present include Windows Containers, Docker Swarm and Kubernetes (Google Kubernetes Engine, for example, is Google’s managed Kubernetes service).

Container Images

An image is a packaged file system which, when run, operates as a container and can be used to run application services or other programmable functions. A Docker image can carry the user-space files of a full OS distribution or only the minimal binaries and libraries an application needs, but in either case the running container shares the kernel of its host rather than booting its own. For example, you could run an Ubuntu image on a Windows host or on an Ubuntu host. In the latter case, the container uses the host kernel directly and is more lightweight; on a Windows host, a Linux image generally needs a lightweight virtual machine to supply a Linux kernel. If using Docker, we can pull an image from Docker’s registry, run the image as a container and load an application into the container, such that we have an application running in a container on our host.

Container Security

As these images are generally immutable (read-only images), we need to verify the integrity of the ‘golden image’. The base image should be hardened and minimally configured to provide only the required functionality, and it should include security controls which provide visibility of security events.

The most recently approved image can be timestamped and deployed to an image directory. DevOps configurations can identify the new image and enable staggered auto-deployment of this image to production systems.
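
As a rough sketch of the selection and staggering steps described above, the following picks the newest timestamped image from a directory listing and splits the production hosts into deployment batches. The `golden-<timestamp>.tar` naming scheme, the timestamp format and both function names are assumptions made for this example, not a convention of any particular DevOps tool.

```python
from datetime import datetime

# Assumed naming scheme: golden-YYYYMMDDTHHMMSSZ.tar (hypothetical).
PREFIX = "golden-"
SUFFIX = ".tar"
STAMP_FORMAT = "%Y%m%dT%H%M%SZ"


def latest_image(filenames):
    """Return the filename carrying the newest timestamp, or None if none match."""
    stamped = []
    for name in filenames:
        if not (name.startswith(PREFIX) and name.endswith(SUFFIX)):
            continue
        stamp = name[len(PREFIX):-len(SUFFIX)]
        try:
            stamped.append((datetime.strptime(stamp, STAMP_FORMAT), name))
        except ValueError:
            # Ignore files whose timestamp does not parse.
            continue
    return max(stamped)[1] if stamped else None


def staggered_batches(hosts, batch_size):
    """Split hosts into consecutive batches so the rollout can pause between them."""
    return [hosts[i:i + batch_size] for i in range(0, len(hosts), batch_size)]


if __name__ == "__main__":
    images = ["golden-20240101T000000Z.tar", "golden-20240301T120000Z.tar", "notes.txt"]
    print(latest_image(images))
    print(staggered_batches(["host1", "host2", "host3", "host4", "host5"], 2))
```

Deploying one batch at a time means a faulty image affects only part of the estate before the rollout can be halted.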

We can consider the following controls to be applicable:

- Access control to the image configuration system

- Change control for image management

- Peer review of changes

- Vulnerability testing

- Image approval and integrity checking (checksum)

- Change control for image deployment

- Configuration which does not permit interactive access to the running system

- Configuration which offloads security relevant events to a separate server
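
The integrity check in the list above can be sketched with a SHA-256 checksum: the digest recorded when the image was approved is compared with the digest of the file about to be deployed. This is a minimal illustration using Python's standard library; the function names are hypothetical, and how the approved digest is stored and protected is outside the scope of the sketch.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Hash the file in chunks so large images need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as image_file:
        for chunk in iter(lambda: image_file.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def image_is_approved(path, approved_digest):
    """Return True only if the file's digest matches the approved hex digest."""
    return hmac.compare_digest(sha256_of_file(path), approved_digest.strip().lower())
```

A deployment step would call `image_is_approved` with the image path and the recorded digest, and refuse to proceed on a mismatch. A checksum only detects changes; it gives assurance only if the approved digest itself is stored where an attacker who can alter the image cannot also alter the digest.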
